Local search optimization has become one of the most competitive areas of digital marketing. Agencies are constantly looking for new ways to identify client opportunities and strengthen their SEO strategies. A Google Maps scraper has quietly become an effective tool in this space, helping marketing teams gather precise business data, assess competition, and pinpoint ranking opportunities. This isn’t about generic data scraping; it’s about using structured local data strategically to drive measurable SEO results.

1. Building Local Business Databases for SEO Outreach

When agencies handle SEO for clients, outreach is often a major challenge. It takes time to identify businesses that need optimization services or backlink partnerships. By using a local business listing scraper, marketers can quickly create categorized databases of potential clients in specific industries or regions. For instance, scraping restaurant listings can reveal which ones lack websites or show inconsistent business information, both of which are useful signals for SEO outreach.
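As a rough illustration of the restaurant example, the sketch below filters scraped records for listings that are missing a website or phone number. The record layout and field names (`name`, `website`, `phone`) are assumptions made for this example, not the export schema of any particular scraper.

```python
# Hypothetical scraped records; field names are assumptions for illustration.
listings = [
    {"name": "Luigi's Trattoria", "category": "Restaurant",
     "website": "https://luigis.example", "phone": "555-0101"},
    {"name": "Corner Diner", "category": "Restaurant",
     "website": "", "phone": "555-0102"},
    {"name": "Sushi Go", "category": "Restaurant",
     "website": None, "phone": ""},
]


def outreach_candidates(records):
    """Return listings missing a website or phone, with the missing fields noted."""
    candidates = []
    for rec in records:
        # Treat None, empty strings, and whitespace-only values as missing.
        missing = [
            field for field in ("website", "phone")
            if not (rec.get(field) or "").strip()
        ]
        if missing:
            candidates.append({"name": rec["name"], "missing": missing})
    return candidates


for candidate in outreach_candidates(listings):
    print(candidate["name"], "is missing:", ", ".join(candidate["missing"]))
```

The same filter can be extended with other fields (opening hours, description) once the actual export columns are known.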

2. Identifying Keyword Gaps Using Google Maps Data

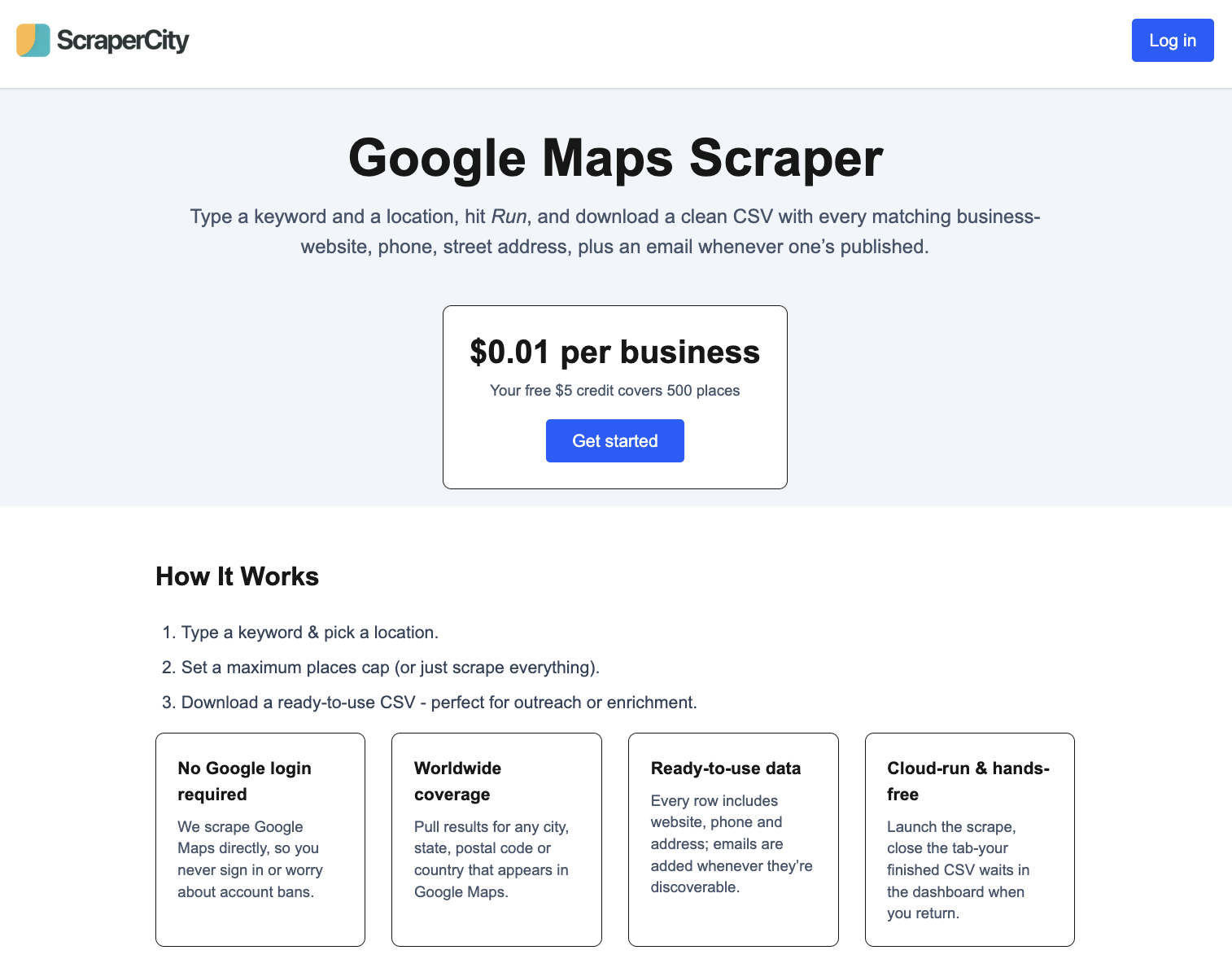

A powerful but underused strategy is keyword validation through scraped business categories. Each business listing has a primary category and, optionally, additional secondary categories that reflect how Google classifies that business. By analyzing this data through the GMaps scraper by ScraperCity, agencies can identify which categories dominate certain areas and which are underrepresented. This insight helps marketers find keyword opportunities competitors are ignoring, improving local ranking potential.
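One way to approach the category analysis is to count how often each category appears in the scraped listings for an area and then list the rare ones. This is a minimal sketch; the field names `primary_category` and `secondary_categories` and the `max_listings` threshold are assumptions for illustration.

```python
from collections import Counter

# Hypothetical scraped listings for one area; field names are assumptions.
area_listings = [
    {"primary_category": "Dentist", "secondary_categories": ["Cosmetic dentist"]},
    {"primary_category": "Dentist", "secondary_categories": []},
    {"primary_category": "Dentist", "secondary_categories": ["Emergency dental service"]},
    {"primary_category": "Orthodontist", "secondary_categories": ["Dentist"]},
]


def category_counts(records):
    """Count every category (primary or secondary) across all listings."""
    counts = Counter()
    for rec in records:
        counts[rec["primary_category"]] += 1
        counts.update(rec.get("secondary_categories", []))
    return counts


def underrepresented(counts, max_listings=1):
    """Return categories that appear in at most `max_listings` listings."""
    return sorted(cat for cat, n in counts.items() if n <= max_listings)


counts = category_counts(area_listings)
print(counts.most_common(1))      # the dominant category in this sample
print(underrepresented(counts))   # candidate keyword gaps
```

A low count only shows that few businesses claim a category; whether it is a real keyword opportunity still depends on search demand, which this data does not measure.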

3. Verifying NAP Consistency for Client Listings

Local SEO depends heavily on NAP consistency: the accuracy of name, address, and phone details across directories. By scraping listings, agencies can cross-check client details against what appears on Google Maps and other platforms. A location-based business data scraper helps automate this verification process, revealing duplicate listings, inconsistent names, or outdated phone numbers. Fixing these discrepancies builds local credibility and can help businesses rank higher in search results.
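The cross-check can be sketched as a normalize-then-compare step: strip formatting differences first, then report which NAP fields still disagree. The normalization rules here (digits-only phones, dropping a leading US country code, removing punctuation) are assumptions chosen for the example; real data usually needs more, such as abbreviation handling.

```python
import re


def normalize_phone(phone):
    """Keep digits only; drop a leading '1' country code (US assumption)."""
    digits = re.sub(r"\D", "", phone or "")
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def normalize_text(value):
    """Lowercase, remove punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", (value or "").lower())
    return " ".join(cleaned.split())


def nap_mismatches(reference, scraped):
    """Return the NAP fields that differ after normalization."""
    mismatches = []
    if normalize_text(reference["name"]) != normalize_text(scraped["name"]):
        mismatches.append("name")
    if normalize_text(reference["address"]) != normalize_text(scraped["address"]):
        mismatches.append("address")
    if normalize_phone(reference["phone"]) != normalize_phone(scraped["phone"]):
        mismatches.append("phone")
    return mismatches


# Hypothetical client record versus a scraped listing.
client = {"name": "Acme Plumbing, LLC", "address": "12 Main St.",
          "phone": "+1 (555) 010-0199"}
listing = {"name": "ACME Plumbing LLC", "address": "12 Main Street",
           "phone": "555-010-0199"}
print(nap_mismatches(client, listing))
```

In this sample the name and phone match once formatting is stripped, while "St." versus "Street" is still flagged, which is the kind of discrepancy a reviewer would then decide how to resolve.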

4. Tracking Competitor Density in Target Areas

Understanding competition is critical for designing successful SEO campaigns. With scraped Google Maps data, agencies can visualize how many businesses in a particular category operate within a given region. This information highlights low-competition zones which, when paired with evidence of local demand, are ideal for clients planning to expand or relocate. It also helps identify which businesses have strong online visibility versus those lagging behind. The result is a more informed, location-based SEO strategy.
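A simple way to quantify density is to count same-category listings within a radius of a point of interest, using the haversine great-circle distance. The sketch below assumes each scraped record carries `lat` and `lon` fields; those names and the sample coordinates are hypothetical.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two lat/lon points."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def competitors_within(center, records, category, radius_km):
    """Count listings of `category` within `radius_km` of `center` (lat, lon)."""
    lat0, lon0 = center
    return sum(
        1 for rec in records
        if rec["category"] == category
        and haversine_km(lat0, lon0, rec["lat"], rec["lon"]) <= radius_km
    )


# Hypothetical scraped listings with coordinates.
gyms = [
    {"name": "Iron Gym", "category": "Gym", "lat": 40.0, "lon": -75.0},
    {"name": "Flex Studio", "category": "Gym", "lat": 40.01, "lon": -75.0},
    {"name": "Far Fitness", "category": "Gym", "lat": 41.0, "lon": -75.0},
    {"name": "Nearby Cafe", "category": "Cafe", "lat": 40.0, "lon": -75.001},
]
print(competitors_within((40.0, -75.0), gyms, "Gym", radius_km=5.0))
```

Running the same count over a grid of candidate points gives the raw numbers behind a density heatmap.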

5. Automating Citation Building Research

Citations are key to improving local SEO performance. However, researching citation opportunities manually is tedious. Using a digital marketing automation tool powered by Maps scraping, agencies can extract a list of businesses along with their URLs and check where they’re mentioned online. This automation accelerates citation research and helps clients get listed in more relevant and authoritative directories, which supports their local search rankings.
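Before checking where businesses are mentioned, it usually helps to reduce the scraped URLs to a clean, de-duplicated list of domains. The sketch below does that with the standard library; the input list is hypothetical, and the actual mention lookup is left out because it depends on whichever search or backlink tool the agency uses.

```python
from urllib.parse import urlparse


def unique_domains(urls):
    """Reduce website URLs to a sorted list of unique bare domains."""
    domains = set()
    for url in urls:
        url = (url or "").strip()
        if not url:
            continue
        # urlparse only recognizes the host when a scheme or '//' is present.
        if "://" not in url:
            url = "//" + url
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return sorted(domains)


# Hypothetical website values taken from scraped listings.
scraped_urls = [
    "https://www.luigis.example/menu",
    "http://luigis.example",
    "cornerdiner.example/contact",
    "",
]
print(unique_domains(scraped_urls))
```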

6. Measuring Local Authority and Engagement

Business ratings and reviews from Google Maps offer a goldmine of SEO signals. By scraping this data, agencies can analyze which competitors receive more engagement and identify patterns in customer feedback. These insights can influence SEO content strategy, reputation management efforts, and keyword prioritization. The richer the data, the stronger the optimization plan.
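To compare engagement across competitors, one option is to rank them by review count and compute each one's share of all reviews in the sample. The field names (`rating`, `review_count`) and the sample figures below are assumptions for illustration.

```python
# Hypothetical scraped competitor data; field names are assumptions.
competitors = [
    {"name": "Bright Smile Dental", "rating": 4.8, "review_count": 60},
    {"name": "Downtown Dentistry", "rating": 4.3, "review_count": 120},
    {"name": "Family Dental Care", "rating": 4.9, "review_count": 20},
]


def engagement_summary(records):
    """Rank listings by review count and add each one's share of all reviews."""
    total = sum(rec["review_count"] for rec in records)
    ranked = sorted(records, key=lambda rec: rec["review_count"], reverse=True)
    return [
        {
            "name": rec["name"],
            "rating": rec["rating"],
            "review_share": round(rec["review_count"] / total, 2) if total else 0.0,
        }
        for rec in ranked
    ]


for row in engagement_summary(competitors):
    print(f'{row["name"]}: rating {row["rating"]}, share {row["review_share"]:.0%}')
```

Looking at rating and review share side by side helps separate a listing with many average reviews from one with a handful of excellent ones.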

7. Supporting Multi-Location SEO Strategies

For brands managing multiple outlets, tracking performance across different cities can be complex. A Google Maps scraper can extract data for all branches, allowing agencies to benchmark visibility, review count, and consistency of business details across locations. This creates a unified performance snapshot that helps identify which branches need extra SEO support and where optimization is already paying off.
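A branch benchmark can be as simple as comparing each location against the brand's own median. In the sketch below, a branch is flagged when its review count falls below a fraction of the median or its rating trails the median by a set gap. The thresholds (`review_ratio`, `rating_gap`) and the sample data are arbitrary assumptions, not recommended values.

```python
from statistics import median

# Hypothetical per-branch data scraped for one brand.
branches = [
    {"city": "Austin", "rating": 4.6, "review_count": 200},
    {"city": "Dallas", "rating": 4.4, "review_count": 150},
    {"city": "Houston", "rating": 3.9, "review_count": 40},
]


def branches_needing_support(records, review_ratio=0.5, rating_gap=0.3):
    """Flag branches that lag the brand median on reviews or rating."""
    median_reviews = median(rec["review_count"] for rec in records)
    median_rating = median(rec["rating"] for rec in records)
    flagged = []
    for rec in records:
        low_reviews = rec["review_count"] < review_ratio * median_reviews
        low_rating = rec["rating"] < median_rating - rating_gap
        if low_reviews or low_rating:
            flagged.append(rec["city"])
    return flagged


print(branches_needing_support(branches))
```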

8. Enhancing Reporting with Data Visualization

After collecting data, turning it into insights is what sets top-performing agencies apart. Scraped data can be visualized using tools like Power BI or Looker Studio (formerly Google Data Studio) to present trends and recommendations to clients. For instance, heatmaps showing competitor density or charts reflecting category share help clients understand their market position clearly. This not only improves decision-making but also strengthens client trust in data-driven SEO work.

9. Tips for Ethical SEO Data Collection

While automation saves time, it’s crucial to collect data responsibly. Always focus on publicly available business information and use scraping frequency controls to avoid server strain. Agencies that operate transparently build long-term credibility while still benefiting from automation efficiency. Responsible scraping aligns with ethical SEO practices, ensuring client data projects remain compliant and professional.
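A frequency control can be as basic as enforcing a minimum interval between requests. The sketch below wraps any fetch function with such a delay; `fetch` is a placeholder supplied by the caller, and the two-second default is an arbitrary assumption rather than a documented limit of any service.

```python
import time


def fetch_politely(urls, fetch, min_interval_s=2.0,
                   sleep=time.sleep, clock=time.monotonic):
    """Call `fetch(url)` for each URL, waiting at least `min_interval_s`
    between the start of consecutive requests."""
    results = []
    last_start = None
    for url in urls:
        if last_start is not None:
            remaining = min_interval_s - (clock() - last_start)
            if remaining > 0:
                sleep(remaining)
        last_start = clock()
        results.append(fetch(url))
    return results


# Usage with a stand-in fetch function (no network access involved).
pages = fetch_politely(
    ["https://a.example", "https://b.example"],
    fetch=lambda url: f"fetched {url}",
    min_interval_s=0.1,
)
print(pages)
```

Passing `sleep` and `clock` as parameters keeps the pacing logic easy to test without real waiting.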

Conclusion

For agencies that specialize in local SEO, the Google Maps scraper has evolved into a strategic asset. It transforms raw location data into insights that improve outreach, keyword targeting, and competitive analysis. By integrating scraping with their optimization processes, marketers gain an up-to-date understanding of the local search environment. The outcome is smarter campaigns, higher rankings, and faster client growth, all driven by accurate, data-backed decision-making.
